Creating Systems that can Think, Feel -- and Smell

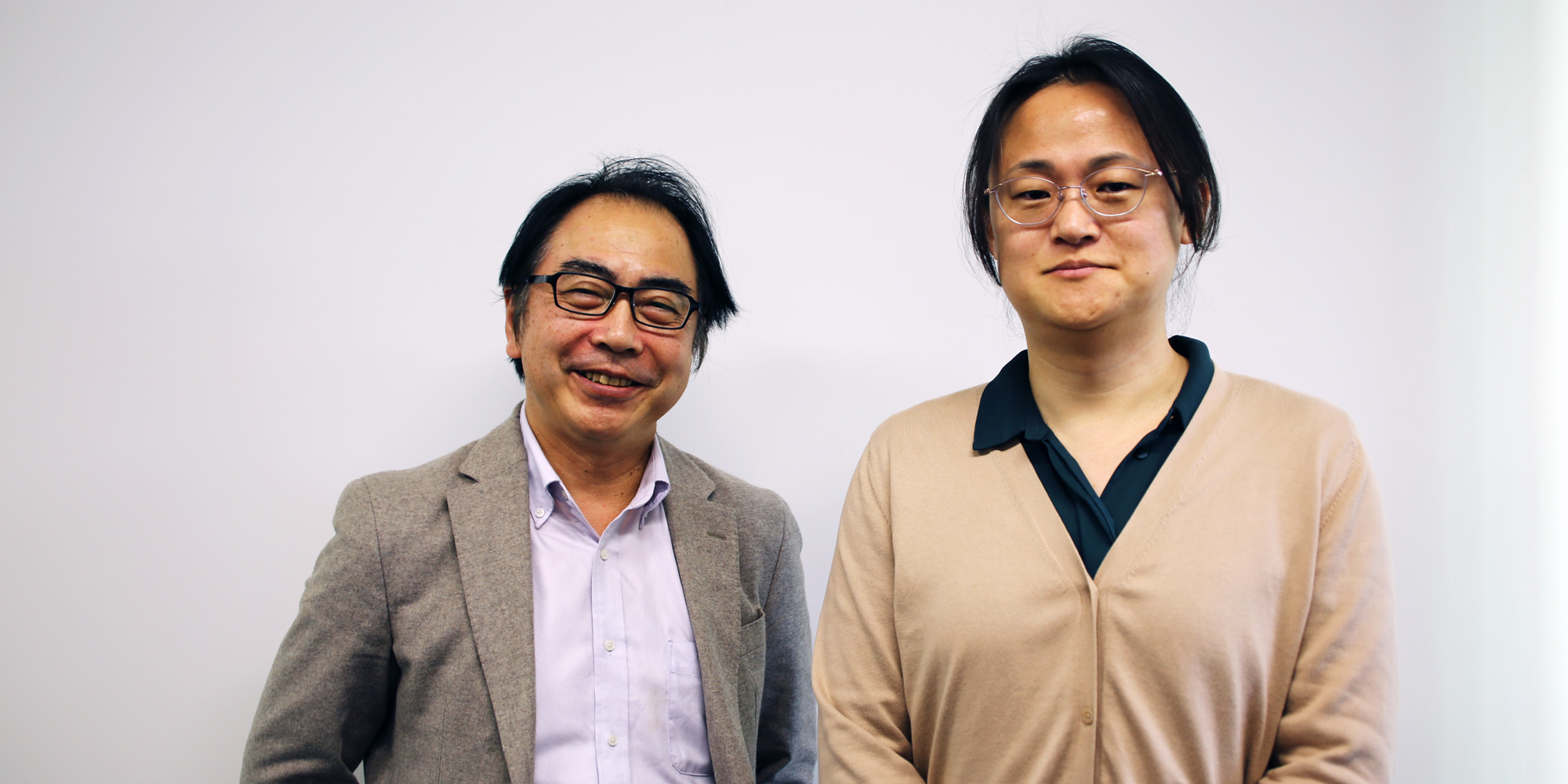

Tomonobu Nakayama

MANA Principal Investigator, WPI-MANA

Genki Yoshikawa

Group Leader of Nanomechanical Sensing Group, WPI-MANA

MANA researchers are pursuing innovative technologies that mimic the functions of the human brain.

Thinking and perception -- the hallmarks of an artificially intelligent system -- are the focus of intense research at MANA, and it is producing exciting results, as well as a host of promising applications.

Two MANA researchers, Tomonobu Nakayama and Genki Yoshikawa, are using nanotechnology to build systems whose performance approaches that of our own biological systems. Dr. Nakayama is developing a nanoarchitectonics network that exhibits emergent cognition -- an artificial brain -- and Dr. Yoshikawa is developing a nano-perceptive olfactory system -- an artificial nose.

Thinking and sensing

Nakayama and his team are using the tools of nanotechnology to create synthetic neural networks that can “think” and “learn,” which could result in novel memory devices.

“Nanotechnology is quite important in integrating nano-functionality into a system,” Nakayama noted. “And in this case, the sensing and cognitive parts do not rely on software. Probably the type or speed of mechanical motion also imparts cognitive information.”

Brain-like behavior cannot be achieved only by making everything accurate and precise -- there is more to cognition and learning than merely flipping switches on and off. “So we need to think about the relationship between the fluctuation or speed of the action and how to include a variety of uncontrolled natural factors,” Nakayama said.

The team formed their “neuromorphic network” by integrating numerous silver nanowires, each covered with a polymer insulating layer about 1 nm thick. Each junction between two nanowires forms a variable resistive element -- a synaptic element -- that behaves like a neuronal synapse. The resulting structure resembles a kitchen scrubber made of entangled wire, with many contacts between the wires.

When the network is electrically stimulated, a huge number of junctions interact with each other to achieve the best routing through the network. The electrical signals can exploit multiple transport pathways across the network and spontaneously adapt to changing transmission routes. This process leads to emergent brain-like behavior resembling cognitive functions such as learning, memorization, forgetting, becoming alert and returning to calm.

“In our case, we are not really controlling the kind of contact -- it forms naturally,” Nakayama noted. As a result of that natural formation, the properties of each junction are similar but not exactly the same. The junctions have what are known as “memristive,” or synaptic, properties.

“We want to incorporate this sort of memristive device into computer architecture and realize a new form of ‘neuromorphic’ computing,” he said.
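To make the idea of a memristive junction concrete, here is a minimal toy sketch of a single junction whose conductance grows while it is stimulated and slowly relaxes when the input stops, loosely echoing the "learning" and "forgetting" described above. It is not the team's model: the `Junction` class, the update rule and every parameter value are assumptions chosen purely for illustration.

```python
from dataclasses import dataclass


@dataclass
class Junction:
    """Toy memristive junction. All values are illustrative, not measured."""
    w: float = 0.0        # internal state, kept between 0 and 1
    g_off: float = 1e-6   # conductance in siemens when w = 0
    g_on: float = 1e-3    # conductance in siemens when w = 1
    alpha: float = 5.0    # strengthening rate per volt-second (assumed)
    beta: float = 0.5     # spontaneous decay rate per second (assumed)

    def conductance(self) -> float:
        # Interpolate linearly between the "off" and "on" conductances.
        return self.g_off + self.w * (self.g_on - self.g_off)

    def step(self, voltage: float, dt: float) -> None:
        # Stimulation strengthens the junction; without input it relaxes.
        growth = self.alpha * abs(voltage) * (1.0 - self.w)
        decay = self.beta * self.w
        self.w = min(1.0, max(0.0, self.w + (growth - decay) * dt))


def run(junction: Junction, voltages, dt: float = 0.01):
    """Apply a voltage sequence and record the conductance after each step."""
    trace = []
    for v in voltages:
        junction.step(v, dt)
        trace.append(junction.conductance())
    return trace


j = Junction()
stimulated = run(j, [1.0] * 200)  # 2 s of 1 V stimulation
rested = run(j, [0.0] * 400)      # 4 s with no input
print(f"after stimulation: {stimulated[-1]:.2e} S, after rest: {rested[-1]:.2e} S")
```

In this sketch the conductance rises during stimulation and falls back part of the way during rest. A real nanowire network couples a huge number of such junctions, which this single-junction toy does not attempt to capture.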

The brain is often compared with a computer, but in terms of cognition and recognition, the actual performance of the brain is not really computer-like, Nakayama noted. In conventional computers, everything works according to design. The user inputs a command, and all the transistors and other components do what they’re supposed to. Once the best routing is established, it is registered as a pathway, and does not change.

Nakayama’s network system takes a different approach. “It tries to memorize a better route from one point to another through the network, but we do not control any of the junctions,” he said. “We just give it input, and the network automatically optimizes the routing.”

The routing continues to fluctuate in response to stimuli, and sometimes switches to alternative pathways. “So ‘optimized’ pathways do not always stay ‘optimal.’ They can change in reaction to external stresses,” he said. “Because of this, we don't say this network provides artificial intelligence,” he continued. “We say it's a kind of specific intelligence. It's not, strictly speaking, artificial.”

The team has already built a memory device and run computer simulations showing that such a network can be used for character recognition. That kind of task can be done with AI, using computers, but “the difference in this case is that we don't need a computer program, and all the control is done naturally,” Nakayama said.

Detecting and perceiving

Networks that alter and reconsider their decisions need stimuli from the world around them to provide meaningful input.

For example, there’s more to a sense of smell than just detecting chemicals in the air around us. We are constantly bombarded by olfactory data, as we detect aromas and compare them to our memories of similar smells to identify them based on past experience. Smell is as much intuition as it is chemical detection.

Yoshikawa is developing an olfactory sensor, a sort of electronic nose, which can identify odors using both measured data and what it has learned and remembered. His sensor combines molecule detection and recognition to make a kind of cognitive sensor.

“A conventional gas sensor gives a one-dimensional signal -- just one number, for example the percentage of oxygen or CO2,” he said. “An olfactory sensor, on the other hand, gives you information about what that smell is. It can measure complicated mixtures of various gases, and once it learns a smell, it remembers how they reacted together.”

This is similar to how a biological nose works. “The first time we smell coffee, for example, we remember that sensation and link it to coffee. The next time we smell it, we remember the sensation and identify it as coffee.”
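The learn-then-recognize idea can be sketched in a few lines. The example below is only an illustration, assuming a sensor that returns one number per channel and using a simple nearest-centroid rule; the article does not say which recognition method the group uses, and the function names and all response values here are invented.

```python
import math


def centroid(samples):
    """Average several multi-channel responses into one reference pattern."""
    n = len(samples)
    return [sum(channel) / n for channel in zip(*samples)]


def learn(library, label, samples):
    """Store the averaged response pattern for a smell under its label."""
    library[label] = centroid(samples)


def identify(library, response):
    """Return the label whose stored pattern is closest to the new response."""
    return min(library, key=lambda label: math.dist(library[label], response))


# Invented four-channel responses, for illustration only.
library = {}
learn(library, "coffee", [[0.82, 0.10, 0.45, 0.30], [0.78, 0.14, 0.50, 0.28]])
learn(library, "tea", [[0.20, 0.65, 0.15, 0.55], [0.25, 0.60, 0.12, 0.60]])

guess = identify(library, [0.80, 0.12, 0.48, 0.31])
print(guess)
```

A single-number gas sensor could not support this kind of comparison. The multi-channel pattern is what gives each smell a recognizable signature.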

Yoshikawa’s device is based on a Membrane-type Surface stress Sensor (MSS), a very sensitive and compact nanomechanical sensor, developed at MANA, capable of detecting a wide range of substances, including gaseous molecules and biomolecules such as DNA and proteins.

When the gas molecules come into contact with the MSS, it experiences mechanical deformation or stress in the nanometer range. The sensor can identify the absorbed molecules by analyzing the degree of deformation based on changes in electrical resistance.
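As a rough illustration of how a small resistance change can become a readable voltage, the sketch below uses a generic textbook Wheatstone bridge with one active resistor. The article does not describe the readout circuit, so the bridge configuration, the function names and the example values are all assumptions.

```python
def bridge_output(v_bias, r_nominal, delta_r):
    """Output voltage of a Wheatstone bridge with one active resistor.

    Assumed generic circuit: three fixed resistors of r_nominal and one
    sensing resistor of r_nominal + delta_r, driven by v_bias.
    """
    active_divider = (r_nominal + delta_r) / (2 * r_nominal + delta_r)
    reference_divider = 0.5
    return v_bias * (active_divider - reference_divider)


def relative_change(v_out, v_bias):
    """Invert the bridge equation to recover delta_r / r_nominal."""
    y = v_out / v_bias
    return 4 * y / (1 - 2 * y)


# Illustrative numbers: a 0.1 % resistance change on a 1 kilohm resistor.
v_out = bridge_output(v_bias=1.0, r_nominal=1000.0, delta_r=1.0)
recovered = relative_change(v_out, v_bias=1.0)
print(f"bridge output: {v_out * 1e6:.1f} uV, recovered dR/R: {recovered:.4f}")
```

The point of the sketch is only that a fractional resistance change maps to a proportional, easily amplified voltage, which is what makes a nanometer-scale deformation measurable.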

“It is difficult to observe that tiny mechanical deformation, but the sensor can detect it and transduce it into an easy-to-read electrical signal,” Yoshikawa said. The results are then analyzed and identified with the help of AI and a huge, and growing, library of olfactory data.

“We perceive smells with intuition as much as with hard data when we are detecting or identifying smells. There is still no rational or logical way of thinking when we smell,” he said.

Wide applications

Because the device can detect such a wide range of substances, its potential applications are broad. Practical olfactory sensors could make a massive difference in a wide variety of fields, including agriculture, environmental sensing, food management, medicine and criminal investigation.

One dream is breath diagnostics -- using an olfactory device to test for cancer and other diseases by analyzing a patient’s exhaled breath. Preliminary results have been positive in differentiating the breath of cancer patients and healthy persons.

The olfactory device could yield basic scientific breakthroughs as well. “One of our goals is to illustrate the mechanism of the nose,” Yoshikawa said. “The human nose is super-sensitive -- it can detect parts per trillion. It has about 400 different types of receptors, so the number of odors we can differentiate is almost infinite.”

“And still, nobody knows the exact mechanism of how our nose can detect such small concentrations.”

New ways of thinking

Because they mimic the inner workings and natural efficiency of the brain, future devices based on these MANA researchers’ technologies could demonstrate flexibility, speed and energy efficiency similar to the brain’s own processing.

These systems can handle tasks in ways that are closer to how human beings operate. Instead of merely enhancing or reprogramming existing computers, they point the way toward an entirely new way of computing -- and major advances in machine learning and AI.

Tomonobu Nakayama (left) and Genki Yoshikawa (right)

Further information

"Brain-like Functions Emerging in a Metallic Nanowire Network"

https://www.nims.go.jp/mana/news_room/press/2019111101.html

Nanomechanical Sensors Lab.

http://y-genki.net/

WPI-MANA

https://www.nims.go.jp/mana/